What is Mixed Reality?

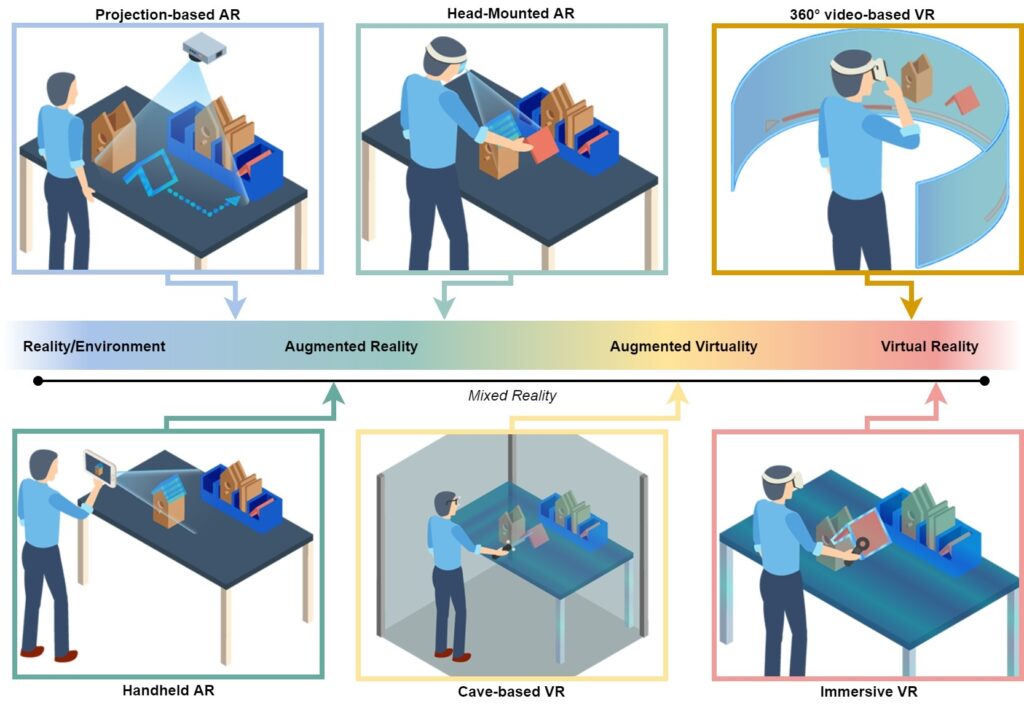

Mixed Reality (MR) is not limited to a single device or technology but is a spectrum, as originally envisioned by Paul Milgram in the Reality-Virtuality Continuum (see the figure). This conceptual framework spans the entire range between the completely real environment and the completely virtual environment. It describes the visual merging of real and virtual worlds to produce new visualizations where physical and digital objects coexist.

Consequently, this continuum encompasses a diverse set of technologies. These range from handheld and head-mounted Augmented Reality (AR) capable of overlaying digital information onto the physical world, to projection-based systems that directly illuminate physical surfaces. Moving further along the spectrum, we encounter Augmented Virtuality, which integrates real-world elements such as video feeds or 360-degree camera captures into virtual spaces. Finally, the continuum reaches fully immersive Virtual Reality (VR) environments experienced through closed head-mounted displays or room-sized CAVE installations.

Our Research Focus

We approach Mixed Reality from a Human-Computer Interaction (HCI) perspective. We don’t just build applications; we engineer the underlying methods that make them effective, accessible, and measurable. A primary pillar of our work is the development of Adaptive Training Systems and intelligent agents that adapt to the user. For instance, in ASTRA, we investigate anthropomorphic AI agents for soft skill development, while TwinMaP utilizes digital twins to create adaptive work instructions that evolve based on employee feedback. Similarly, Sidekick focuses on accessible digital assistance for sheltered workplaces.

A core mission of our group is removing “programming barriers” to immersive media. We build open-source authoring tools that let domain experts create their own immersive content without writing code. This includes visual scripting for handheld AR in TrainAR, intuitive VR editors for nursing education in ViRDiPA, and mobile training scenarios for midwifery in Heb@AR. We also evaluate what we build: by integrating eye tracking and physiological sensors in projects like ReqET, and by developing dedicated analysis platforms like SUS.tools, we aim to quantify cognitive load and usability and to validate the effectiveness of our training scenarios.

Infrastructure & Equipment

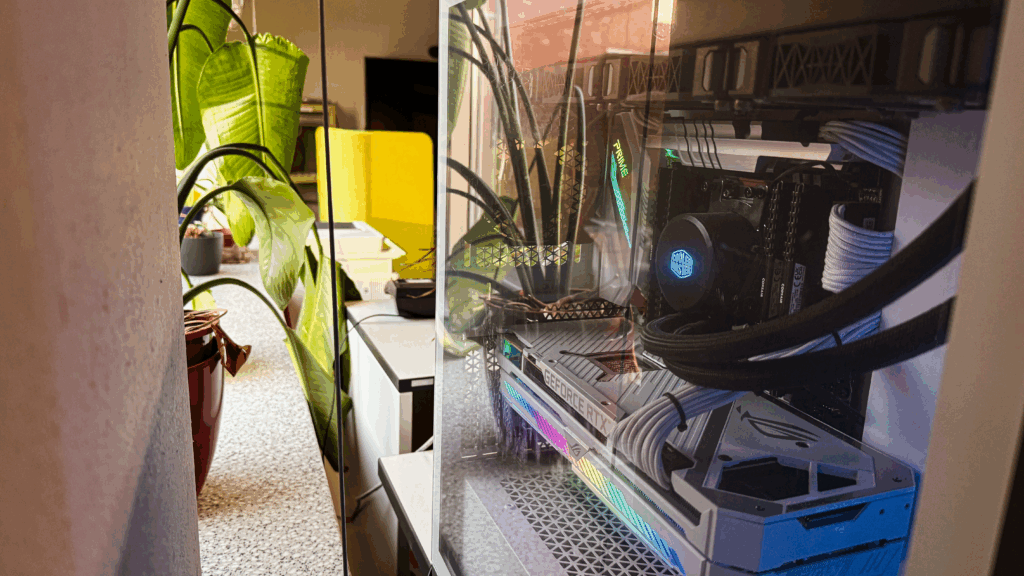

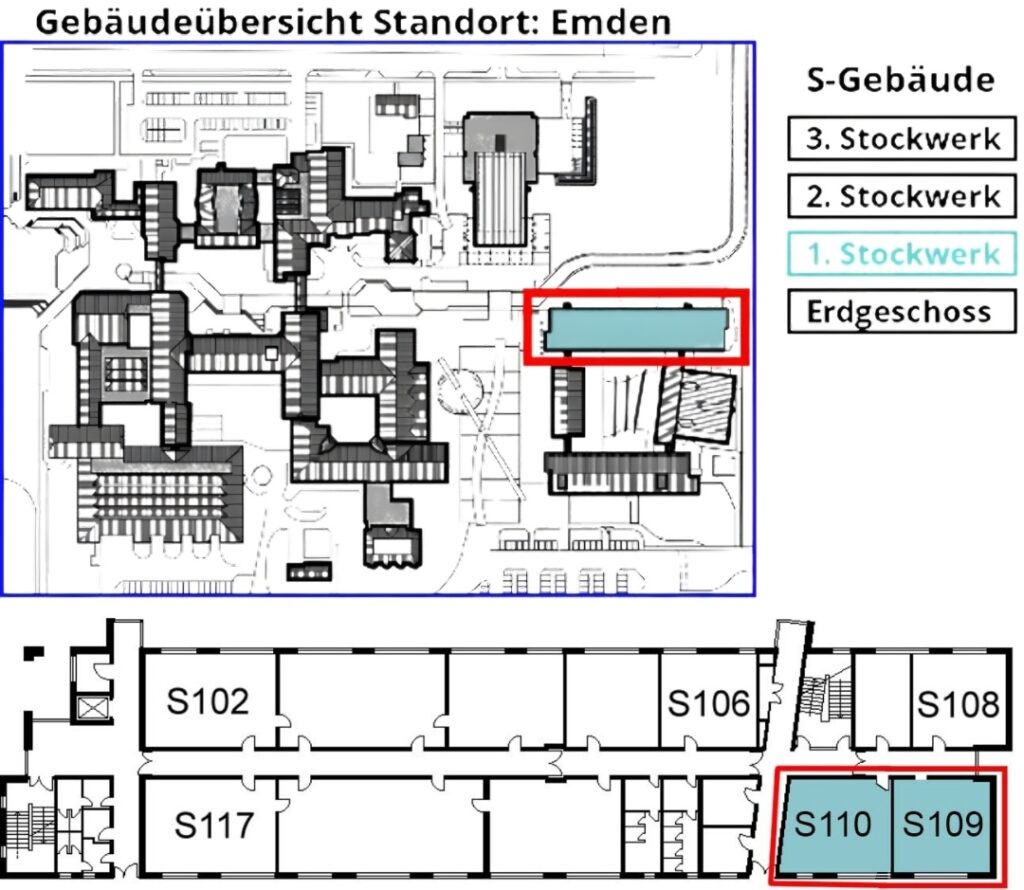

The Mixality Lab, located in Room S110, serves as our central hub for projects, research, and practical teaching. To support robust research, we maintain a diverse inventory spanning the entire technological spectrum. This includes high-fidelity HMDs (like the Varjo XR-3) and consumer VR/AR headsets (e.g., Meta Quests, HoloLens, Magic Leaps), alongside standard smartphones and tablets for scalable deployments. Additionally, we use 3D scanning technology, 360-degree cameras, and green screen installations to capture immersive content. Complementing these production tools, we employ mobile biosignal sensors (e.g., Tobii eye trackers) for research studies.

Navigation

You can find us at the Emden Campus (Constantiaplatz 4, 26723 Emden). We are located in Building 7 (Faculty of Technology / Electrical Engineering & Informatics). Proceed to the first floor (Level 1); our main staff office is in Room S109, and the lab facilities are right next door in Room S110.

Technical Support

If you need help with software installations, have problems with lab equipment, or have technical-administrative questions, please contact our Lab Admin:

Jannik Franssen, M.Eng.

Call: +49 4921 8071877

Email: jannik.franssen@hs-emden-leer.de